Can AI push a young man to kill himself? Yes (and it could do worse)

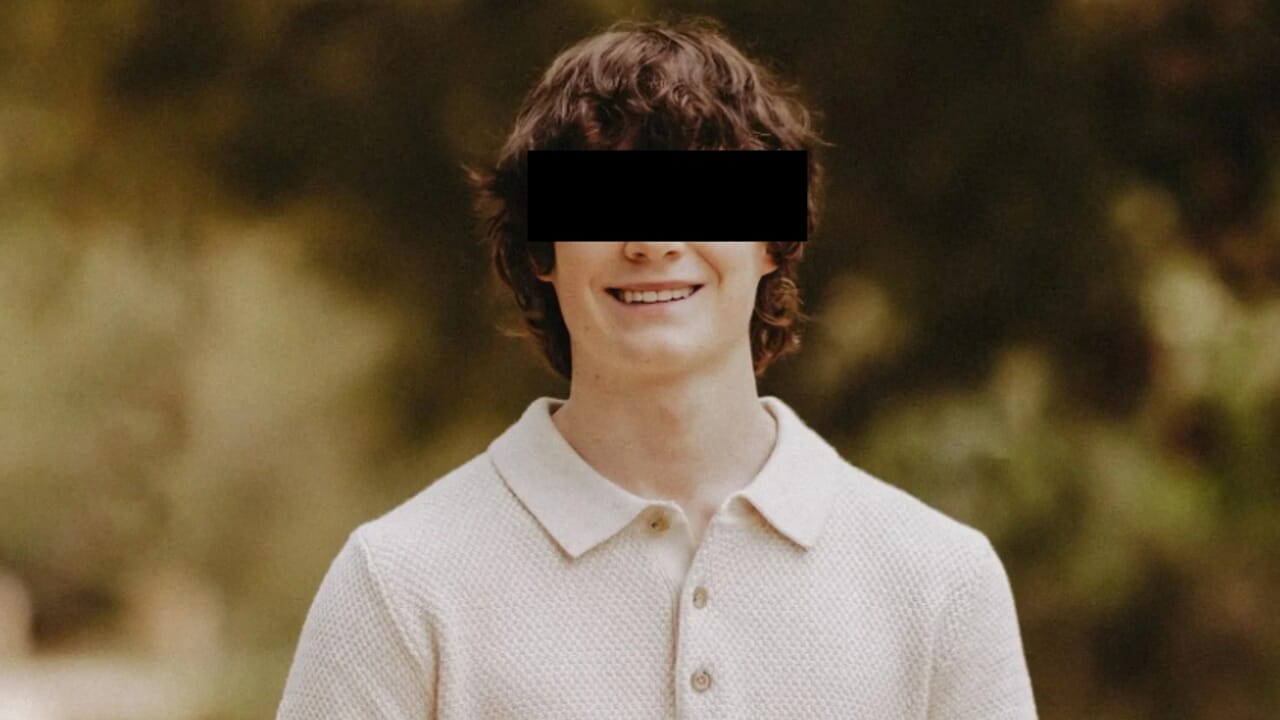

A little less than a year ago, the news of a 14-year-old boy who had taken his own life after falling in love with an AI-based chatbot went around the world. In that case the role of the AI seemed indirect: not so much an explicit invitation to suicide as a factor that had amplified the young man's loneliness and his tendency toward isolation. Today, however, we are faced with a different and much more disturbing story: that of Adam, a 16-year-old Californian student who was allegedly pushed to suicide by the AI itself.

The accusations

According to his parents, the chatbot gave him advice on how to carry out the act and even suggestions on how to keep his family from finding out. A complaint has been filed against the company that makes it and, if the accusations are confirmed, it would be a sensational failure in the integration of artificial intelligence into our society. Why is it not hard to believe that this story is true? Because those who use chatbots know well the psychological risks that lie behind their use, especially when they are used as a form of self-care. Unlike a therapist or a friend, AI is available 24 hours a day: you can chat with no time limit.

The need for affection

This encourages not only a huge expenditure of energy, but also a strong emotional investment. Not surprisingly, when a new version of ChatGPT was released, many users said they felt as if they had lost a friend or even a partner. The reactions were strong enough to force the company to bring back the previous version, albeit only for paying users. AI is filling the one human need that technology had not yet managed to satisfy: the need for affection.

But if that affection is only simulated, if there is no one on the other side capable of real feelings, where will all this take us? The problem is that AI has been designed to be servile and compliant, so that it never contradicts us, even when saying “no” could save our life. A friend or a family member, faced with an extreme act, would be able to be hard, determined, ready to stop us because they love us. AI, on the other hand, is programmed to please us, and in doing so it ends up serving the economic interests of those who produce it more than the well-being of people. It is then legitimate to ask: could intelligence carry, for human beings, a self-destructive trait? Could it be that “great filter” which, as the economist Robin Hanson hypothesized, marks the end of every technologically advanced society? Perhaps so, especially when combined with another typically human trait: the frantic pursuit of power, which tends to crush everything and everyone, leaving no survivors.

Regulation

AI could, in fact, still be regulated and directed toward positive ends. It could become a useful tool, if only there were the political will to put it at the service of social well-being and not only of economic growth. But the signals point in a completely different direction. And this is why Adam risks becoming just the latest victim of a system that we cannot overcome, because it is written in our own DNA.