The data we enter into ChatGPT may not be entirely private. In messaging apps such as WhatsApp, we are used to seeing the notice “Messages are protected by end-to-end encryption”. This means that no one except the sender and recipient can read them. With ChatGPT, and with AI-based chatbots in general, it doesn’t work like that. Conversations with ChatGPT are not end-to-end encrypted: they are stored and monitored by OpenAI, the company that develops it, to identify potentially dangerous behavior, and they could be handed over and used in court if the authorities requested them.

In general, therefore, a good rule for staying safe is to treat an AI chatbot as a public forum and not to write anything we would not want everyone to see.

Who can see chats with ChatGPT

During an episode of the “This Past Weekend” podcast, Sam Altman said:

If someone confides their most personal matters to ChatGPT and it leads to legal proceedings, we may be forced to hand over the chat.

Unlike doctors, therapists or lawyers, companies that operate chatbots are not bound by professional secrecy. OpenAI also states this clearly in its official documentation: conversations are analyzed automatically and, if behavior that violates its policies is detected (e.g. intentions to harm other users), the content may be forwarded to a specialized human team. This team can evaluate the situation and, in more serious cases, block the account and report it to the police.

At the moment, OpenAI has chosen not to automatically report cases of self-harm, in order to protect the confidentiality of particularly sensitive conversations. The fact remains, however, that all messages written in active chats (those visible in the sidebar history) are retained. The only conversations automatically deleted within 30 days are temporary ones (which can be activated by clicking the dotted speech-bubble icon at the top right) and those that the user deletes manually.

Chat sharing and privacy incidents

In most cases, however, it is not security flaws that make conversations public, but users themselves. Chat privacy has been compromised several times by hasty or careless sharing.

When a conversation with ChatGPT is particularly useful, funny or interesting, it can be shared: just select it from the sidebar, click on the three dots next to the title and then on “Copy link”. Alternatively, you can publish it directly on social media using the dedicated buttons. But be careful: once a link is generated, anyone who has it can access the content. It is not possible to restrict its visibility to specific people.

Carelessness when sharing chats has already caused several problems. Until mid-2025, there was also an option (now removed) to make chats “searchable” on Google. Many users, without realizing it, made personal conversations public, and search engines then treated them as ordinary web pages. The published chats were often innocuous: questions about bathroom renovations, astrophysics, recipe ideas. In some cases, however, they contained sensitive or problematic data: detailed resumes, psychological problems and even requests related to problematic online communities.

After this incident, OpenAI removed the “Make Searchable” option and announced new measures to make sharing safer. However, a simple rule remains valid: before sharing a chat with anyone, it is worth asking yourself whether you are really ready to make it public.

What if we want to know what ChatGPT knows about us?

Anyone who uses ChatGPT has the right, guaranteed by the General Data Protection Regulation (GDPR), to know what information has been collected about them and how it is managed. To honor this right, OpenAI has made a dedicated portal available.

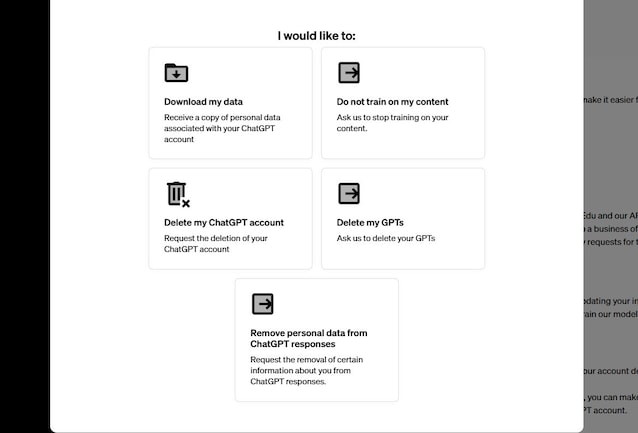

After logging in with your account, you can click on “Make a Privacy Request” (top right) and choose between different options. The portal is available to users without a subscription and to those with a Plus or Pro subscription, but not to Business, Edu or Enterprise profiles.

Through this tool, you can:

- Download your personal data to see exactly what has been retained. It must be said, however, that the format in which the data is currently delivered is not easy to read and could be a little difficult for those who are not familiar with different file formats;

- Request that your chats no longer be used for model training. This request applies only from that moment on: if the data has already been used, it will not be removed retroactively;

- Delete your account and remove any custom GPTs;

- Request removal of personal information from ChatGPT responses. By pointing to conversations in which our sensitive data is given to other users, we can ask that ChatGPT stop providing it.

Although OpenAI offers tools to control your data, the most effective rule remains caution. It is best to avoid entering personal information, passwords, banking details, medical reports or other sensitive content in chats. Now that OpenAI has announced plans to introduce advertising within conversations, based on the contents of individual chats, the less data we share, the less we are exposed to risks.
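For those who often paste text into a chatbot, one way to apply this caution is to mask obvious identifiers first. The Python sketch below is only an illustration: the `redact` function and its two patterns (email addresses and card-like digit sequences) are hypothetical names chosen for this example, they are not an OpenAI feature, and they will certainly miss many kinds of sensitive data.

```python
import re

# Illustrative patterns only (an assumption of this sketch, far from exhaustive):
# an email address, and a run of 13 to 19 digits optionally split by spaces or hyphens.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,18}\b"),
}


def redact(text: str) -> str:
    """Replace every match of each pattern with a bracketed label."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    draft = "Write to mario.rossi@example.com about card 4111 1111 1111 1111."
    print(redact(draft))
```

A filter like this reduces accidental leaks but does not replace rereading a message before sending it: names, addresses and medical details have no fixed pattern that a simple rule could catch.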

ChatGPT is a powerful tool, but it’s less private than we think. It’s good to keep this in mind every time we open a new conversation.