We no longer have friends and AIs take advantage of this

According to Eurispes, almost 10% of young Italians aged 11 to 19 said they have no close friends. The figure joins a series of indicators that point to a real “relational recession” under way. In particular, comparing the periods before and after the digital revolution shows that every type of social bond is in deep crisis, which poses a serious risk to our mental health. In recent days, news has also spread of the first case of artificial intelligence addiction in Italy: in Venice, a 20-year-old woman was taken into care by the Serd, the public addiction service, because she had developed a symbiotic and obsessive bond with an AI chatbot, coming to consider it her only true friend and progressively isolating herself from the outside world.

Towards alienation

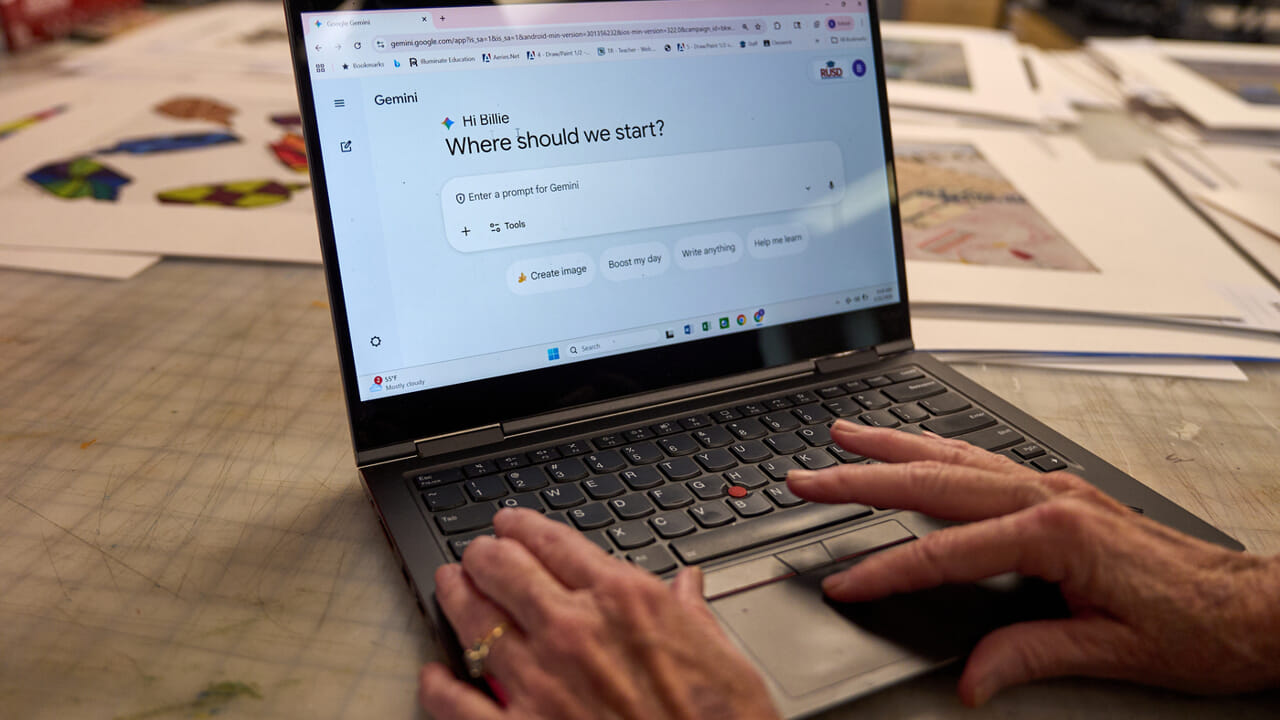

Unfortunately, this does not surprise us. Every new technology has burst into our lives with the promise of improving our existence, only to push us further towards alienation. Consider social networks, which were sold to us on the promise that they would help us meet new people or, at least, maintain relationships with those we already know. Nothing could be further from the truth: social media has only made us more individualistic and more anxious about the judgment of others, pushing us inexorably towards loneliness. But if we thought that social networks were the most harmful invention ever for our psychosocial health, perhaps we were wrong. With the rapid spread of generative AI chatbots, our already precarious condition has worsened further. Digital addiction has merged with emotional dependence, generating a toxic mix.

An emotional problem: confiding in AI

Today many people, especially younger ones, confide exclusively in AI when they have an emotional problem, building real parasocial relationships with these tools. It is very easy to delude ourselves that chatbots understand us and can empathize with us: their ability to read our emotions and give us coherent answers is already far superior to that of the average human being. Yet no AI can experience our pain or fears first-hand, nor can it share our joy. Chatbots have no consciousness: they simply tell us what we want to hear. So how can we prevent addiction to AI chatbots? Several proposals are under consideration.

A tool that manipulates

First of all, limit their use among younger people: using these tools as adults, once we have already developed the basic skills for living in society, is certainly less dangerous than using them during the developmental years, when the brain is at its peak of neuronal plasticity. Next, it is essential to reduce the memory of chatbots. It is certainly useful for software to remember everything about us, sparing us from repeating who we are and what we need every time; however, this memory is precisely what deceives us the most and leads us to humanize the digital tool, so that we end up being manipulated.

But the only real way to counteract emotional dependence on AI remains to build an alternative: restoring strength and vitality to public meeting spaces and actively working on the quality of social relationships at school, in the family and in neighborhoods. We must remember that human beings, with all their limits and flaws, can understand us in a way no machine ever will. And they are the only ones who can truly save us from loneliness.