Gemini 3 Flash was officially released on December 17. Google's new efficiency-focused AI model brings the reasoning and understanding capabilities of frontier models to a version optimized for response speed and computational efficiency. Gemini 3 Flash represents an important step: it keeps the technological foundations of Gemini 3 Pro but drastically reduces latency and costs, making it suitable both for everyday use and for complex, automated workflows. Let's take a closer look at the features of Gemini 3 Flash.

The features of Gemini 3 Flash

After the debut of Gemini 3 Pro and Gemini 3 Deep Think last month, Google has completed the "family" with Gemini 3 Flash, which preserves the advanced reasoning ability, the multimodal understanding (i.e. the ability to interpret text, images, audio and video in an integrated way) and the agentic coding functions, while adding greater efficiency. The latest model is designed to respond faster and consume fewer resources, dynamically adapting the amount of "thinking" it does to the complexity of the task. In everyday use it consumes on average about 30% fewer tokens than Gemini 2.5 Pro, thus increasing efficiency and precision.

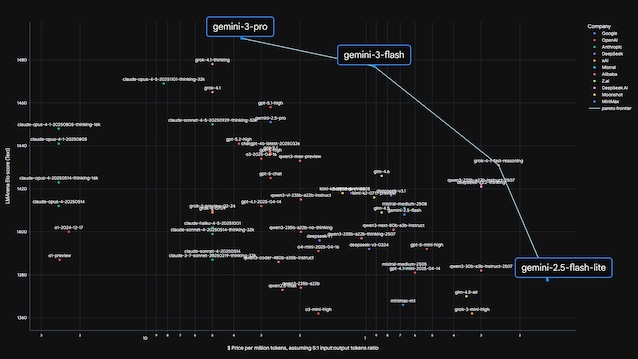

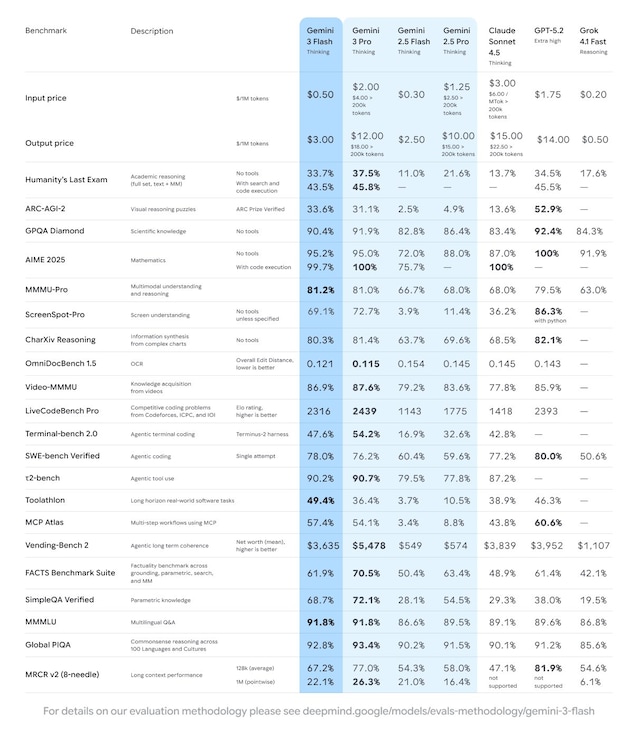

Benchmark results help put these claims in context. Tests such as GPQA Diamond and Humanity's Last Exam evaluate advanced academic-level skills, while MMMU Pro measures multimodal understanding. In all these tests, Gemini 3 Flash achieves scores comparable to those of Gemini 3 Pro and surpasses previous models, demonstrating that reducing costs does not necessarily mean lower output quality. Another indicator cited by Google is the LMArena Elo score, a chess-inspired rating system that compares model performance based on user preferences. In this context, Gemini 3 Flash sits beyond the Pareto frontier, an economic concept that describes the limit beyond which improving one variable, such as speed, leads to a worsening of another, such as quality or price.
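To give a feel for what a chess-style rating means, here is a minimal sketch of the classic Elo expected-score formula. It is the standard chess formula, not necessarily the exact computation LMArena uses, and the ratings shown are hypothetical values chosen only for illustration.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Classic Elo expectation: the share of head-to-head comparisons
    that A is expected to win against B, given their ratings."""
    return 1 / (1 + 10 ** ((rating_b - rating_a) / 400))


# Hypothetical ratings, not taken from any leaderboard.
model_a = 1500
model_b = 1400

# A 100-point gap means A is expected to be preferred roughly 64% of the time.
print(f"{expected_score(model_a, model_b):.2f}")
```

In a setting based on user preferences, a "win" would correspond to a user preferring one model's answer over the other's in a side-by-side comparison.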

Speed remains the model's most obvious strong point. According to data provided by Google, Gemini 3 Flash is approximately three times faster than Gemini 2.5 Pro, with significantly lower costs: $0.50 per million input tokens and $3 per million output tokens. Inference, the ability of an AI model to spot patterns and deduce information from data it has not encountered before, has been optimized to support high-frequency workflows. Not surprisingly, on SWE-bench Verified, a benchmark that evaluates the ability to solve real programming problems, Gemini 3 Flash achieves results superior even to those of Gemini 3 Pro, reaching a score of 78%.
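To see what these prices mean in practice, here is a minimal sketch that estimates the cost of a workload from the two rates quoted above. The function name and the token counts are hypothetical, and actual billing may include factors the article does not cover.

```python
# Prices quoted in the article, in US dollars per one million tokens.
INPUT_PRICE_PER_MILLION = 0.50
OUTPUT_PRICE_PER_MILLION = 3.00


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in dollars for a given token volume."""
    input_cost = input_tokens / 1_000_000 * INPUT_PRICE_PER_MILLION
    output_cost = output_tokens / 1_000_000 * OUTPUT_PRICE_PER_MILLION
    return input_cost + output_cost


# Hypothetical workload: 2 million input tokens and 500,000 output tokens.
print(f"${estimate_cost(2_000_000, 500_000):.2f}")  # prints $2.50
```

Because output tokens cost six times as much as input tokens at these rates, workloads that generate long responses are dominated by the output price.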

Where Gemini 3 Flash is available

The characteristics of Gemini 3 Flash explain the interest of companies and developers in the new efficient model from "Big G." Platforms like Google AI Studio, Vertex AI and Gemini Enterprise already offer access to the model, while companies like JetBrains, Figma and Bridgewater are using it to accelerate design, analysis and automation processes. For "mere mortals," Gemini 3 Flash is already available in the Gemini app and in AI Mode in Google Search.